Introduction to Data-Centric AI

IAP 2024

Typical machine learning classes teach techniques to produce effective models for a given dataset. In real-world applications, however, data is messy, and improving models is not the only way to get better performance: you can also improve the dataset itself rather than treating it as fixed. Data-Centric AI (DCAI) is an emerging science that studies techniques to improve datasets, which is often the best way to improve performance in practical ML applications. While good data scientists have long practiced this manually through ad hoc trial and error and intuition, DCAI treats the improvement of data as a systematic engineering discipline.

This is the first-ever course on DCAI. This class covers algorithms to find and fix common issues in ML data and to construct better datasets, concentrating on data used in supervised learning tasks like classification. All material taught in this course is highly practical, focused on impactful aspects of real-world ML applications, rather than mathematical details of how particular models work. You can take this course to learn practical techniques not covered in most ML classes, which will help mitigate the “garbage in, garbage out” problem that plagues many real-world ML applications.
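To give a flavor of what "finding issues in ML data" can look like for classification, here is a minimal sketch of one simple heuristic for spotting possibly mislabeled examples. It is an illustration only, not necessarily the method taught in the lectures. It assumes you already have out-of-sample predicted class probabilities from some classifier (for example, obtained via cross-validation). The function name `flag_possible_label_issues` and the toy numbers are hypothetical.

```python
def flag_possible_label_issues(labels, pred_probs):
    """Return indices of examples whose given label looks suspicious.

    labels: list of integer class labels (0..K-1), possibly noisy.
    pred_probs: list of per-example probability lists, assumed to be
        out-of-sample predictions from some classifier (an assumption).
    """
    num_classes = len(pred_probs[0])

    # Per-class threshold: the average predicted probability of class k
    # among the examples that are labeled k.
    thresholds = []
    for k in range(num_classes):
        probs_k = [p[k] for y, p in zip(labels, pred_probs) if y == k]
        # If no example carries label k, use 1.0 so class k is rarely "confident".
        thresholds.append(sum(probs_k) / len(probs_k) if probs_k else 1.0)

    flagged = []
    for i, (y, p) in enumerate(zip(labels, pred_probs)):
        # Classes the model is confident about for this example.
        confident = [k for k in range(num_classes) if p[k] >= thresholds[k]]
        if not confident:
            continue
        # Most likely class among the confident ones.
        best = max(confident, key=lambda k: p[k])
        if best != y:
            flagged.append(i)
    return flagged


# Toy data (made up for illustration): example 2 is labeled 0,
# but the model strongly predicts class 1.
labels = [0, 0, 0, 1, 1, 1]
pred_probs = [
    [0.9, 0.1],
    [0.8, 0.2],
    [0.1, 0.9],
    [0.2, 0.8],
    [0.1, 0.9],
    [0.3, 0.7],
]
print(flag_possible_label_issues(labels, pred_probs))  # [2]
```

Flagged examples are candidates for human review rather than confirmed errors; the quality of the flags depends on how good the underlying classifier's probabilities are.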

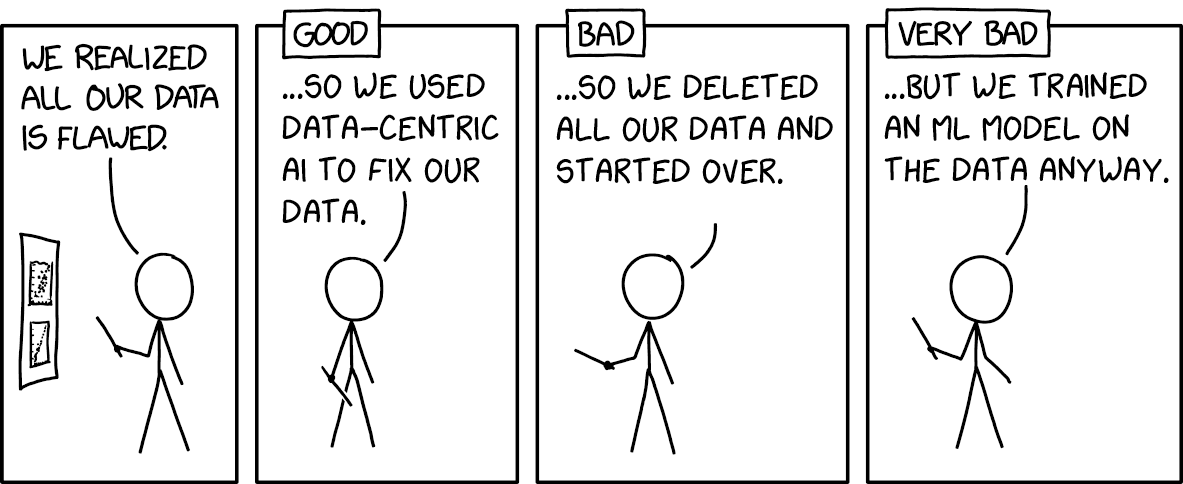

Inspired by XKCD 2494 “Flawed Data”

Syllabus

- 1/16/24: Data-Centric AI vs. Model-Centric AI

- 1/17/24: Label Errors and Confident Learning

- 1/18/24: Advanced Confident Learning, LLM and GenAI applications

- 1/19/24: Class Imbalance, Outliers, and Distribution Shift

- 1/22/24: Dataset Creation and Curation

- 1/23/24: Data-centric Evaluation of ML Models

- 1/24/24: Data Curation for LLMs

Special topics from previous years

- 1/24/23: Growing or Compressing Datasets

- 1/25/23: Interpretability in Data-Centric ML

- 1/26/23: Encoding Human Priors: Data Augmentation and Prompt Engineering

- 1/27/23: Data Privacy and Security

Each lecture has an accompanying lab assignment, a hands-on programming exercise in Python / Jupyter Notebook. You can work on these on your own or in groups. This is a not-for-credit IAP class, so you don’t need to hand in homework.

General information

Dates: Tuesday, January 16 – Friday, January 26, 2024

Lecture: 2-190, 12pm–1pm

Staff: This class is co-taught by Anish, Curtis, and Jonas.

Questions: Post in the #dcai-course channel on this community Slack team (preferred) or email us at dcai@mit.edu.

Prerequisites

Anyone is welcome to take this course, regardless of background. To get the most out of this course, we recommend that you:

- Have completed an introductory course in machine learning (like 6.036 / 6.390). To learn this on your own, check out Andrew Ng’s ML course, fast.ai’s ML course, or Dive into Deep Learning.

- Are familiar with Python and its basic data science ecosystem (pandas, NumPy, scikit-learn, and Jupyter Notebook). To learn this on your own, check out Jupyter Notebook 101, Introduction to Pandas, and Python for Data Analysis.

Beyond MIT

We’ve also shared this class beyond MIT in the hope that others may benefit from these resources.

Acknowledgements

We thank Elaine Mello / MIT Open Learning for making it possible for us to record lecture videos, Ellen Reid and Lisa Bella / MIT EECS for supporting the 2024 offering of this class, Kate Weishaar / MIT Office of Experiential Learning for supporting the 2023 offering of this class, and Ashay Athalye / MIT SOUL for editing and subtitling the lecture videos.